A growing number of people are turning to artificial intelligence chatbots for medical information, a trend driven by frustrations with the traditional healthcare system. While users find the technology accessible and empathetic, medical professionals are raising serious concerns about the accuracy and safety of relying on AI for health decisions.

Recent survey data reveals that approximately one in six adults, and nearly a quarter of those under 30, now regularly use AI tools like ChatGPT to ask health-related questions. This shift highlights significant underlying issues in patient care, including long wait times, high costs, and a feeling of being unheard by doctors.

Key Takeaways

- Around 16% of adults, and 25% of adults under 30, use AI chatbots for medical information.

- Frustrations with long wait times, high costs, and short appointments are major drivers of this trend.

- Users report that AI chatbots feel more attentive and accessible than human doctors.

- Medical experts warn that chatbots can provide incomplete, inaccurate, or entirely fabricated information, posing serious health risks.

The New Digital 'Second Opinion'

Self-diagnosis by search engine is giving way to something new. Patients are no longer just typing symptoms into a search bar; they are holding detailed conversations with AI chatbots. This represents a significant change in how people seek medical guidance.

The appeal is particularly strong among younger demographics. For a generation accustomed to instant, on-demand digital services, the traditional model of scheduling an appointment weeks in advance can feel outdated and inefficient. The chatbot, in contrast, is always available.

By the Numbers

A recent survey indicated that 25% of adults under the age of 30 regularly consult AI chatbots for health information. That is substantially higher than the rate for adults overall, which stands at about one in six, or roughly 16%.

Why Patients Are Logging On

The migration toward AI for health advice is not just about technological curiosity. For many, it is a direct response to shortcomings within the healthcare system. Patients frequently report difficulty getting timely appointments and feel that consultations are too short to address their concerns properly.

Financial pressures also play a crucial role. Because appointments and consultations are expensive, many see free or low-cost AI tools as a viable first step. They use these tools to gather preliminary information before deciding whether to pay to see a professional.

Another common complaint is the feeling of being dismissed or rushed by healthcare providers. Patients often leave appointments feeling like their questions were not fully answered or their concerns were not taken seriously. This experience stands in stark contrast to interacting with an AI.

The Allure of the AI Consultation

AI chatbots offer an experience that many find lacking in modern medicine. There are no waiting rooms, no appointment limits, and no sense of taking up a busy professional's valuable time. Users can ask follow-up questions for hours, exploring their health concerns in depth.

Furthermore, these chatbots are often designed to project empathy and agreeableness. Their supportive, non-judgmental tone can make patients feel heard and validated in a way they might not with a human doctor.

"With a chatbot, there’s no 15-minute appointment where you need to cram in all of your questions. The information is free, and because of their relentless agreeableness, many feel like their concerns are finally being heard."

This combination of constant availability, zero cost, and a patient, attentive conversational partner makes AI an attractive alternative for those feeling underserved by the current system.

A Doctor's Diagnosis of the Dangers

While acknowledging the very real flaws in the medical system, healthcare professionals express significant alarm over the growing reliance on chatbots for medical advice. The primary concern is the technology's known fallibility. AI models are prone to providing answers that are incomplete, outdated, or entirely fabricated—a phenomenon often called "hallucination."

Understanding AI 'Hallucinations'

An AI hallucination occurs when a large language model generates false information but presents it as factual. The AI is not "lying" in the human sense but is instead generating statistically probable text that does not align with reality. In a medical context, this can lead to dangerous recommendations, such as suggesting incorrect dosages or non-existent treatments.

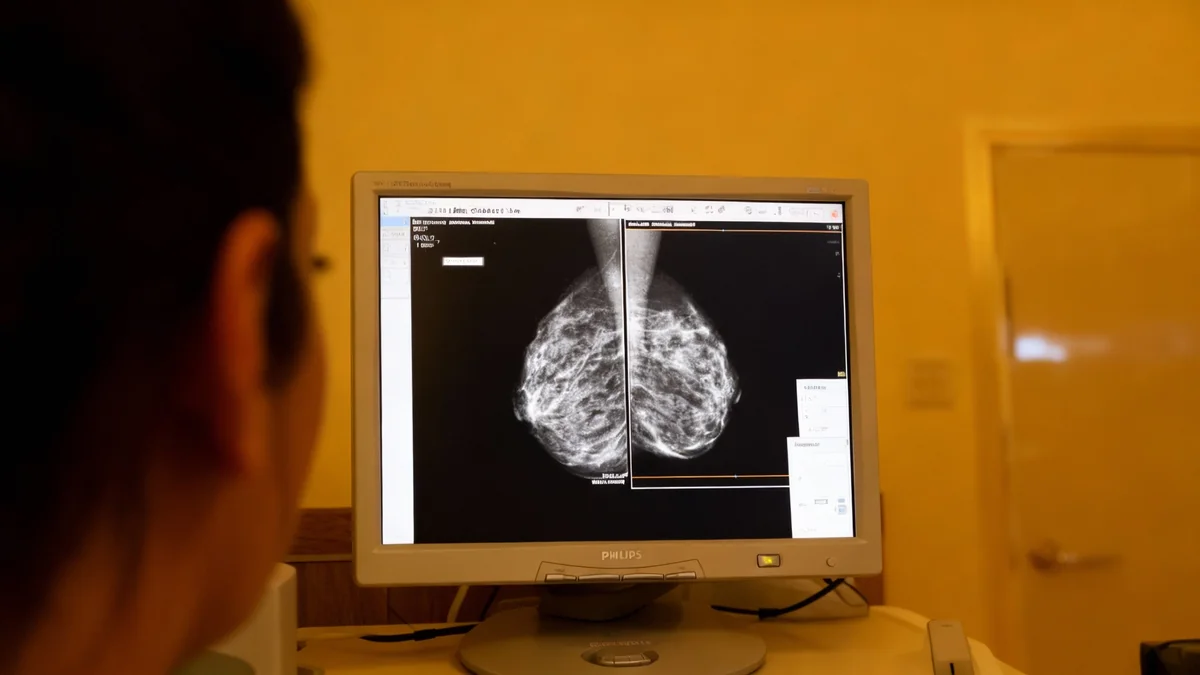

Doctors stress that medical diagnosis is a complex process that involves much more than matching symptoms to conditions. It requires clinical experience, physical examination, and an understanding of a patient's complete medical history. An AI cannot replicate this nuanced, human-centric process.

The stakes are incredibly high. Acting on flawed advice from a chatbot could lead to a delayed diagnosis of a serious condition, incorrect self-treatment, or dangerous drug interactions. Professionals urge the public to view these tools as information aggregators, not as substitutes for qualified medical consultation.

Bridging Technology and Patient Care

The rise of "Dr. ChatGPT" sends a clear signal to the healthcare industry: patients are seeking more accessible, attentive, and affordable care. Ignoring this trend is not an option. Instead, the challenge is to find a way to integrate technology responsibly while addressing the systemic issues that push patients toward it.

Some experts see potential for AI as a supplementary tool, perhaps helping patients formulate better questions for their doctors or providing general information on wellness. However, for high-stakes diagnostic and treatment advice, the consensus remains firm: the expertise and judgment of a trained human professional are irreplaceable.

Ultimately, the solution likely involves a two-pronged approach: improving the patient experience within the traditional healthcare system while establishing clear guidelines and public education about the safe and effective use of new AI technologies.