OpenAI CEO Sam Altman has addressed the growing concerns over the significant energy consumption of artificial intelligence by drawing a direct comparison to the resources required to raise and educate a human being. Speaking at a major AI summit in India, Altman framed the debate around energy efficiency, suggesting that on a per-task basis, AI may already be more efficient than a person.

The comments come at a time of intense scrutiny over the environmental footprint of large-scale AI models, which require vast data centers and immense electrical power to operate. Altman's perspective attempts to reframe this cost by contrasting it with the biological and societal energy invested in human intelligence over millennia.

Key Takeaways

- Sam Altman described criticism of AI energy use as "unfair" during an AI summit in India.

- He compared the energy for training AI models to the 20 years of resources needed for a human to "get smart."

- Altman argued that for answering a single question, AI is likely already more energy-efficient than a human.

- The discussion highlights the broader debate about the environmental impact of the rapidly expanding AI industry.

A New Perspective on Energy Costs

During a question-and-answer session, OpenAI's chief executive was asked about the substantial natural resources demanded by generative AI. He pushed back against the premise, offering a novel comparison to contextualize the energy expenditure.

"It also takes a lot of energy to train a human," Altman stated. "It takes, like, 20 years of life and all of the food you eat during that time before you get smart."

He expanded on this idea, referencing the cumulative energy of human evolution as a prerequisite for modern human intelligence. Altman suggested that a fair comparison should focus on the energy cost of a single task performed by a trained model versus a human.

"The fair comparison is, if you ask ChatGPT a question, how much energy does it take once its model is trained to answer that question, versus a human?" he proposed. "And probably, AI has already caught up on an energy-efficiency basis, measured that way."

The Broader AI Energy Debate

Altman's comments land in the middle of a global conversation about the sustainability of the AI boom. Developing and operating foundation models like those from OpenAI requires massive computational power, which translates directly into high electricity consumption.

The Infrastructure Behind AI

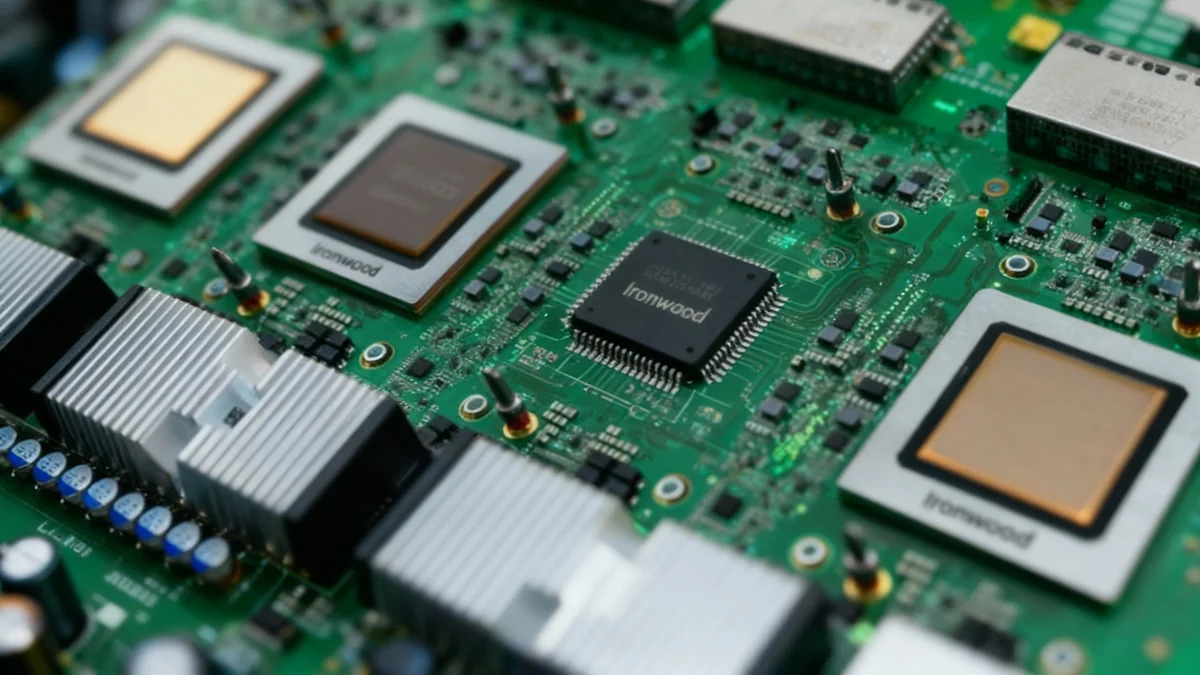

Large language models are trained and run in specialized data centers filled with thousands of high-performance processors. These facilities consume electricity on the scale of small cities, not only to power the computers but also for the extensive cooling systems required to prevent them from overheating.

Critics of the industry point to this energy demand as a significant and growing contributor to carbon emissions. As AI becomes more integrated into daily life and business operations, demand for more powerful models, and for the data centers to support them, is expected to grow sharply.

This has led to concerns about the strain on electrical grids and the potential for the tech industry to reverse progress made in reducing global carbon footprints. Some reports indicate that data centers are extending the operational life of fossil fuel power plants to meet their energy needs.

The Human Brain vs. The AI Model

While Altman's comparison is thought-provoking, the direct energy use of the human brain is remarkably low. The brain runs on approximately 20 watts of power, a small fraction of the several hundred watts that a single high-end GPU used for AI training can draw.

The human brain consumes about 20% of the body's total energy at rest, despite making up only about 2% of its weight. Current technology cannot match this level of efficiency for complex thought and problem-solving.
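To put Altman's "20 years" in rough numbers, the figures above can be turned into a back-of-the-envelope estimate. The short Python sketch below is illustrative only: it assumes the brain's roughly 20-watt draw and its roughly 20% share of resting energy hold constant over the full 20 years, which is a simplification, and the names `energy_kwh`, `BRAIN_WATTS`, `BRAIN_SHARE` and `YEARS` are made up for this example.

```python
# Back-of-the-envelope estimate built only from the figures quoted in the article.
# Assumption: brain power (20 W) and its share of resting energy (20%) are
# treated as constant for all 20 years, which is a simplification.

HOURS_PER_YEAR = 365.25 * 24  # 8,766 hours, averaging in leap years


def energy_kwh(power_watts: float, years: float) -> float:
    """Energy in kilowatt-hours for a constant power draw over a number of years."""
    return power_watts * HOURS_PER_YEAR * years / 1000.0


BRAIN_WATTS = 20.0   # approximate brain power draw cited above
BRAIN_SHARE = 0.20   # brain's approximate share of the body's resting energy
YEARS = 20.0         # Altman's "20 years of life"

# If 20 W is 20% of the resting total, the whole body draws about 100 W at rest.
body_watts = BRAIN_WATTS / BRAIN_SHARE

brain_kwh = energy_kwh(BRAIN_WATTS, YEARS)
body_kwh = energy_kwh(body_watts, YEARS)

print(f"Implied resting power of the body: {body_watts:.0f} W")
print(f"Brain energy over {YEARS:.0f} years: {brain_kwh:,.0f} kWh")
print(f"Whole-body resting energy over {YEARS:.0f} years: {body_kwh:,.0f} kWh")
```

Under these assumptions, the brain alone accounts for roughly 3,500 kWh over 20 years, and the body's resting energy for roughly 17,500 kWh. The estimate leaves out physical activity and the food production, schooling and housing that Altman's comparison implicitly includes, so it is best read as a lower bound on the human side of his ledger rather than a complete accounting.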

The calculation becomes more complex when considering the entire ecosystem. A user interacting with an AI model uses a device like a smartphone or laptop, which consumes power. That device connects via networks to a data center, where the model itself is running on powerful servers. The total energy cost of a single query is therefore distributed across multiple systems.

Altman's argument focuses on the output, meaning the answer to a single question, rather than on the total energy input required to build and maintain the system capable of providing that answer.

The Push for Power

The demand for energy is a central challenge for companies like OpenAI. Reports have highlighted ambitious projects, such as the conceptual "Stargate" data center, which would require its own dedicated power source and could draw gigawatts of power.

The industry's growth is directly tied to its ability to secure massive amounts of power, which has made tech companies some of the largest energy consumers in the world. Some are investing heavily in renewable energy sources to power their operations, but the scale of demand often outpaces the availability of green energy.

- Data Center Growth: The number and size of data centers are increasing globally to support AI development.

- Fossil Fuel Reliance: In some regions, this growth has led to increased reliance on natural gas and even coal to ensure a stable power supply.

- Corporate Responsibility: Major tech firms are under pressure to be transparent about their energy consumption and to invest in sustainable solutions.

As the capabilities of AI continue to advance, the conversation around its environmental and societal costs is becoming more urgent. Sam Altman's attempt to reframe the energy debate underscores the industry's awareness of this criticism, even as it continues its rapid expansion. The ultimate balance between technological progress and environmental sustainability remains one of the defining challenges of the AI era.