A growing number of workers responsible for training and refining artificial intelligence models are expressing deep distrust in the technology they help build. Citing rushed production cycles, inadequate training, and consistent inaccuracies, these individuals are now advising their own friends and family to use generative AI with extreme caution, or to avoid it altogether.

These AI raters and data labelers, who work on some of the world's most popular models from companies like Google and Amazon, provide a rare glimpse into the internal flaws of systems that are rapidly being integrated into everyday life. Their concerns highlight a significant gap between the public perception of AI as a reliable tool and the reality of its development, where speed often takes priority over safety and accuracy.

Key Takeaways

- Workers who train AI models, known as raters, are losing faith in the technology's reliability and safety.

- Many are actively telling their loved ones not to use generative AI tools like chatbots and image generators.

- Key concerns include rushed timelines, poor instructions, and the potential for AI to spread harmful misinformation.

- Experts believe this internal distrust signals that companies are prioritizing rapid deployment over careful validation.

- The workers emphasize that AI is not magical but a fragile system built by an often-unsupported human workforce.

A Crisis of Confidence from Within

The people tasked with making AI more human and accurate are sounding the alarm. These workers, part of a global workforce of tens of thousands, evaluate AI-generated responses for quality, check for biases, and try to prevent the spread of harmful content. Yet, their firsthand experience has led to a profound sense of skepticism.

Krista Pawloski, who works on Amazon's Mechanical Turk platform, recalls a pivotal moment while flagging racist content. She nearly miscategorized a tweet containing a racial slur she didn't recognize, "mooncricket." The incident made her realize the potential scale of such errors across the thousands of workers performing similar tasks.

"I sat there considering how many times I may have made the same mistake and not caught myself," Pawloski said. This experience was so impactful that she now forbids her teenage daughter from using tools like ChatGPT and advises others to test AI on subjects they know well to see its fallibility for themselves.

This sentiment is shared across the industry. A dozen AI raters who work on models including Google's Gemini and Elon Musk's Grok have expressed similar concerns. They point to a corporate culture that consistently prioritizes speed over quality, leaving them feeling that their work is a box-checking exercise rather than a genuine quality control measure.

The Push for Speed Over Safety

For many AI trainers, the core issue is the relentless pressure to work quickly. Brook Hansen, another worker on Amazon Mechanical Turk, explained that while she believes in the concept of generative AI, she doesn't trust the companies developing it.

"We’re expected to help make the model better, yet we’re often given vague or incomplete instructions, minimal training and unrealistic time limits to complete tasks," Hansen stated. She argues that this lack of support creates a significant gap between the expectations placed on workers and the resources provided to them.

Alarming Trends in AI Responses

A recent audit of the top 10 generative AI models by media literacy nonprofit NewsGuard revealed a concerning trend. Between 2024 and 2025, the rate at which chatbots refused to answer questions dropped from 31% to 0%. During the same period, their likelihood of repeating false information nearly doubled from 18% to 35%.

The pressure to work quickly is felt acutely by those evaluating sensitive information. An AI rater for Google, who requested anonymity, expressed alarm over the company's handling of health-related queries. She observed colleagues without medical training evaluating AI-generated medical advice and was assigned similar tasks herself. As a result, she uses AI as little as possible and has banned her 10-year-old daughter from using chatbots.

"She has to learn critical thinking skills first or she won’t be able to tell if the output is any good," the rater explained. A statement from Google noted that ratings are one of many data points and do not directly impact algorithms, and that the company has strong protections in place to surface high-quality information.

'Garbage In, Garbage Out'

The principle of "garbage in, garbage out" is a well-known concept in computer science, suggesting that flawed input data will produce flawed output. Several AI trainers believe this principle is at the heart of the problem with today's AI models.

One rater who began working on Google's products in early 2024 described how he lost trust in AI after about six months. Tasked with finding weaknesses in the model, he drew on his history degree to ask it complex historical questions. He found that the AI would refuse to answer questions about Palestinian history but would provide extensive details on Israeli history.

"We reported it, but nobody seemed to care at Google," he recalled. "After having seen how bad the data is that goes into supposedly training the model, I knew there was absolutely no way it could ever be trained correctly like that."

This experience led him to warn his own family and friends against adopting AI-integrated devices. He advises them to avoid automatic updates that add AI features and to never share personal information with the technology.

The Invisible Workforce

The development of large language models relies heavily on a vast, often invisible, human workforce. These individuals perform crucial tasks like data labeling, content moderation, and response rating. Their work is essential for refining AI behavior, but they often operate under precarious conditions with low pay and intense deadlines, which can compromise the quality of their feedback.

Demystifying the Technology

The workers are now taking it upon themselves to educate the public. Hansen and Pawloski recently gave a presentation to school board members and administrators in Michigan, explaining the ethical and environmental impacts of artificial intelligence.

"Many attendees were shocked by what they learned, since most had never heard about the human labor or environmental footprint behind AI," Hansen said. She emphasizes that AI is not magic but a system built by an army of invisible workers, and it is only as good as the information it's fed.

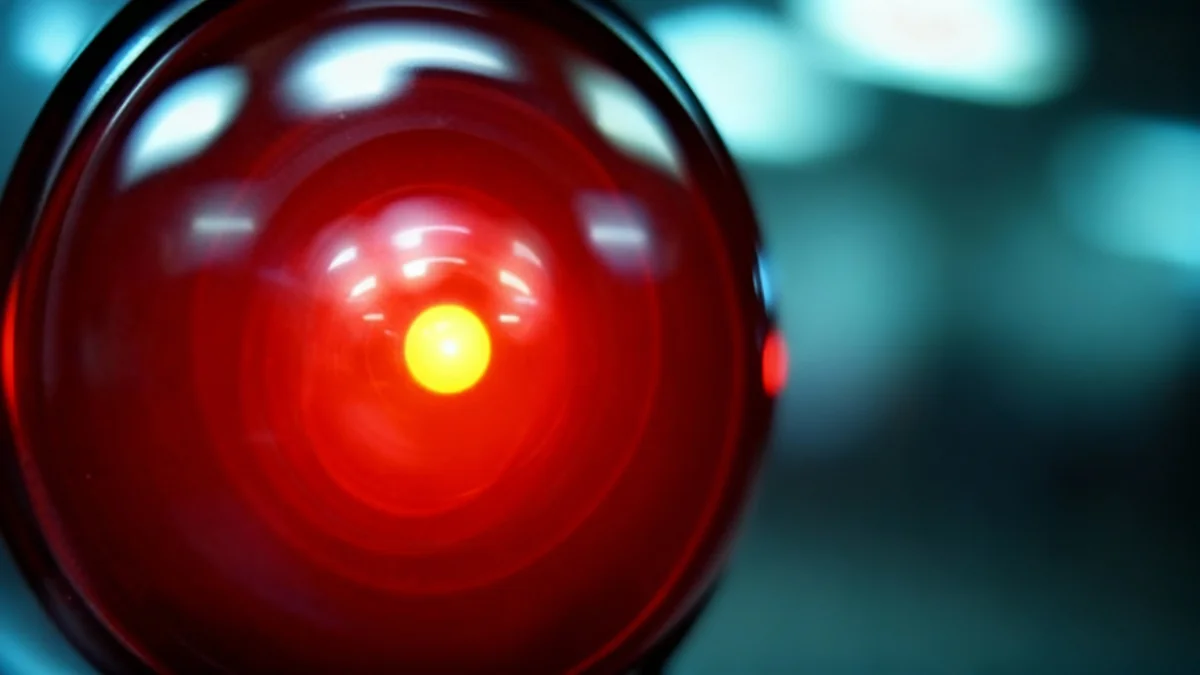

Adio Dinika, who studies AI labor at the Distributed AI Research Institute, supports this view. "Once you’ve seen how these systems are cobbled together – the biases, the rushed timelines, the constant compromises – you stop seeing AI as futuristic and start seeing it as fragile," Dinika noted. "In my experience it’s always people who don’t understand AI who are enchanted by it."

Pawloski compares the current state of AI to the textile industry before awareness of sweatshops became widespread. She believes that as consumers start asking critical questions about where AI data comes from, whether it's built on copyright infringement, and whether workers were treated fairly, meaningful change can occur.