In a series of remarkable scientific advancements, researchers are now using artificial intelligence to translate complex brain activity directly into text, synthesized speech, and even visual images. These breakthroughs offer new hope for individuals who have lost the ability to communicate and provide an unprecedented window into the human mind.

Studies from institutions like Stanford University and the University of California, Davis, are demonstrating how brain-computer interfaces (BCIs) can interpret the neural signals associated with imagined speech, effectively giving a voice to the voiceless. The technology is rapidly moving beyond simple text to capture the nuances of human expression.

Key Takeaways

- AI-powered brain-computer interfaces are converting the imagined or attempted speech of paralyzed individuals into text in real time.

- New systems can decode not only words but also the emotional tone, pitch, and rhythm of speech.

- Separate research is using AI to reconstruct visual images and music directly from brain scans.

- These technologies rely on either surgically implanted microelectrodes or non-invasive fMRI scans, in both cases combined with machine learning algorithms.

A New Voice for Paralyzed Patients

For individuals with conditions like amyotrophic lateral sclerosis (ALS) or who have been paralyzed by a stroke, communication is a profound challenge. Recent research highlights how BCIs are changing this reality. In one study at Stanford University, a 52-year-old woman who had been unable to speak for nearly two decades due to a stroke was able to generate sentences on a computer screen simply by thinking them.

The system involved a small array of electrodes surgically placed on her brain. As she imagined saying words, an AI algorithm decoded the corresponding neural signals and translated them into text. This process, known as decoding "inner speech," represents a significant leap forward from earlier methods that required patients to attempt physical speech movements.

In a similar trial at the University of California, Davis, a 45-year-old man with ALS achieved a communication speed of approximately 32 words per minute with 97.5% accuracy using a BCI that translated his attempted speech into text.
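
To make those two figures concrete: the article does not say how accuracy was scored, but speech decoders are commonly evaluated by word error rate (WER), the number of substituted, inserted, and deleted words divided by the length of the intended sentence. The sketch below assumes that accuracy means 1 minus WER. The example sentences are invented for illustration and do not come from the trial.

```python
def word_error_rate(reference, hypothesis):
    """Word-level edit distance divided by the number of reference words."""
    ref, hyp = reference.split(), hypothesis.split()
    if not ref:
        raise ValueError("reference sentence must contain at least one word")
    # prev[j] = edit distance between the reference words seen so far and hyp[:j]
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # match or substitution
        prev = curr
    return prev[-1] / len(ref)


# Invented example: one wrong word out of eight.
reference = "i would like a glass of water please"
decoded = "i would like a class of water please"
wer = word_error_rate(reference, decoded)
accuracy = 1 - wer
```

Under the same assumption, 97.5% accuracy at 32 words per minute works out to 32 × 0.025 = 0.8 wrong words per minute, or roughly one error every 75 seconds.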

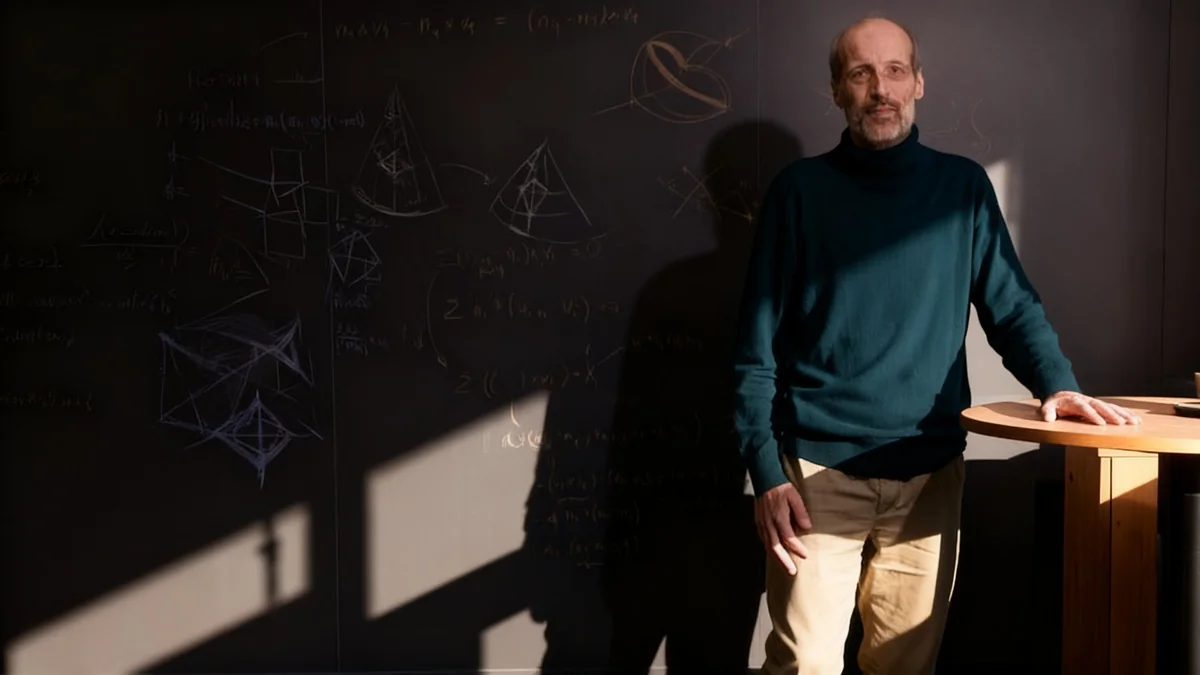

Frank Willett, a researcher at Stanford's Neural Prosthetics Translational Laboratory, noted that while the technology is still developing, it can clearly pick up traces of inner speech. For specific tasks, such as imagining a sentence, the system achieved an accuracy rate of up to 74% in real time.

The Science Behind Thought Translation

The technology driving these advancements is a sophisticated blend of neuroscience and artificial intelligence. It begins with brain-computer interfaces, which establish a direct pathway between the brain's electrical activity and an external device.

How Brain-Computer Interfaces Work

Most of the high-performance systems currently in development use microelectrode arrays. These tiny devices are surgically implanted at the surface of the brain, typically in the motor cortex, the region responsible for muscle movement.

Here's a simplified breakdown of the process:

- The electrodes detect the electrical signals fired by hundreds of individual neurons.

- These signals are sent to a computer.

- A machine learning algorithm, trained on vast amounts of data, recognizes the unique patterns of neural activity associated with specific phonemes (the basic sounds of language).

- The algorithm then assembles these phonemes into words and sentences.
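
The steps above can be sketched in a few lines of Python. This is a toy illustration, not the researchers' method: a nearest-template classifier stands in for the trained machine learning model, and the four-channel firing rates, the phoneme templates, and the two-word lexicon are all made up for the example.

```python
import math

# Hypothetical average firing rates on 4 channels for each phoneme (invented numbers).
PHONEME_TEMPLATES = {
    "HH": [0.9, 0.1, 0.2, 0.1],
    "AY": [0.1, 0.8, 0.1, 0.3],
    "N":  [0.2, 0.1, 0.9, 0.2],
    "OW": [0.1, 0.3, 0.2, 0.9],
}

# Hypothetical pronunciation lexicon: phoneme sequence -> word.
LEXICON = {("HH", "AY"): "hi", ("N", "OW"): "no"}


def classify_phoneme(features):
    """Return the phoneme whose template is closest (Euclidean distance)."""
    return min(PHONEME_TEMPLATES,
               key=lambda p: math.dist(features, PHONEME_TEMPLATES[p]))


def decode_phonemes(windows):
    """Classify each time window of neural features as one phoneme."""
    return [classify_phoneme(w) for w in windows]


def assemble_words(phonemes):
    """Greedy longest-match of the phoneme sequence against the lexicon."""
    words, i = [], 0
    max_len = max(len(key) for key in LEXICON)
    while i < len(phonemes):
        for n in range(min(max_len, len(phonemes) - i), 0, -1):
            key = tuple(phonemes[i:i + n])
            if key in LEXICON:
                words.append(LEXICON[key])
                i += n
                break
        else:
            # No lexicon entry starts here; mark it and move on.
            words.append("<unk>")
            i += 1
    return words


# Noisy recordings for four consecutive time windows (invented).
windows = [
    [0.8, 0.2, 0.1, 0.1],
    [0.2, 0.7, 0.2, 0.2],
    [0.1, 0.2, 0.8, 0.1],
    [0.2, 0.2, 0.1, 0.8],
]
phonemes = decode_phonemes(windows)
words = assemble_words(phonemes)
```

Real systems record from many more channels and typically use neural networks and language models in place of the template lookup and the tiny lexicon, but the division of labor is the same: signals in, phonemes out, then words.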

Maitreyee Wairagkar, a neuroengineer at UC Davis, compared the process to how a smart assistant like Amazon's Alexa interprets sound, but in this case, the AI is interpreting neural signals instead.

"In our brains, we have billions of neurons and trillions of connections," Wairagkar explained. "We were sampling just 256 of those. Newer devices and better technology will be able to sample more neurons, get richer information, and achieve real time intelligible speech."

Beyond Words: Capturing Emotion and Tone

Human communication is far more than the words we use. Tone, pitch, and rhythm convey emotion and context, aspects that have been missing from text-based BCI systems. However, Wairagkar's lab at UC Davis recently made a pivotal breakthrough in this area.

Their latest prototype demonstrated the ability to not only decode words but also to reconstruct the non-verbal elements of speech. A participant with a severe motor speech disorder was able to use the system to produce synthesized speech out loud.

Crucially, he could modulate his voice to convey different meanings. "Our participant was able to ask a question with an inflection at the end of the sentence, and to change his pitch while speaking," said Wairagkar. In one task, he was even able to use the BCI to sing melodies. While the system's output was judged to be 60% intelligible, it marks a critical step toward creating more natural and expressive communication tools for those who need them.

Decoding What We See and Hear

While some researchers focus on speech, others are using similar AI techniques to decode sensory experiences. Scientists in Japan have developed a "mind captioning" method that can generate descriptions and even recreate images of what a person is seeing.

Recreating Images from Brain Scans

This research uses functional magnetic resonance imaging (fMRI), a non-invasive technique that measures brain activity by tracking changes in blood flow. Participants are shown thousands of images while in an fMRI scanner. A decoding model is then trained to associate patterns of brain activity with the visual content of the images, and a generative image model, such as Stable Diffusion, turns the decoded information into a picture. The result is a system that can generate a surprisingly accurate visual representation of what a person is viewing.
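
One way to picture the step of associating brain activity with image content is a simple linear decoder: each scan is a list of voxel readings, and the model learns weights that predict a numeric feature of the image being viewed. The sketch below is a minimal illustration under that assumption, with two invented voxels, one invented image feature, and plain gradient descent. It is not the pipeline used in the studies described here, and the image-generation step is left out entirely.

```python
def fit_linear_decoder(voxels, targets, lr=0.1, steps=500):
    """Fit weights w so that dot(w, voxel_pattern) approximates the target feature.

    Plain gradient descent on mean squared error; no bias term, for brevity.
    """
    n, d = len(voxels), len(voxels[0])
    w = [0.0] * d
    for _ in range(steps):
        grad = [0.0] * d
        for x, y in zip(voxels, targets):
            err = sum(wi * xi for wi, xi in zip(w, x)) - y
            for k in range(d):
                grad[k] += 2 * err * x[k] / n
        w = [wi - lr * gi for wi, gi in zip(w, grad)]
    return w


def predict(w, pattern):
    """Predict the image feature for one voxel pattern."""
    return sum(wi * xi for wi, xi in zip(w, pattern))


# Invented training data: 6 scans x 2 voxels, with one image feature per scan
# (for example, a single number summarizing overall brightness).
train_voxels = [[1, 0], [0, 1], [1, 1], [2, 1], [1, 2], [0, 2]]
train_feature = [0.5, -0.25, 0.25, 0.75, 0.0, -0.5]

weights = fit_linear_decoder(train_voxels, train_feature)
new_scan = [2, 2]  # a scan the decoder has not seen
predicted_feature = predict(weights, new_scan)
```

In practice a scan contains many thousands of voxels and the targets are high-dimensional image representations rather than a single number, so regularized regression is the more usual choice, but the principle of learning a mapping from paired examples is the same.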

Yu Takagi, an associate professor at the Nagoya Institute of Technology, explained that these studies have revealed how different parts of the brain process visual information. The occipital lobe handles low-level details like color and layout, while the temporal lobe processes high-level concepts, such as identifying an object.

Similar efforts are underway to reconstruct audio. Takagi's team used an algorithm to reproduce pieces of music from fMRI scans of subjects who were listening to them. Although reconstructing music is more challenging than reconstructing images, the AI was able to capture the basic character and category of the music.

The Future of Brain-Computer Interfaces

Progress in decoding brain signals is accelerating. Researchers believe that increasing the number of electrodes in implants will yield richer data and more accurate, real-time speech translation. There is also interest in exploring brain regions beyond the motor cortex, such as the superior temporal gyrus, which is involved in auditory processing and could be key to improving inner speech decoding.

The potential applications are vast. Beyond restoring communication, this technology could be used to study psychiatric conditions by visualizing hallucinations, understand how animals perceive the world, or even reconstruct dreams.

While the idea of direct brain-to-brain communication or fully immersive entertainment remains in the realm of science fiction for now, these foundational breakthroughs are paving the way for a future where the boundary between thought and technology becomes increasingly blurred.