A new generation of artificial intelligence tools, known as AI agents, is creating significant challenges for academic integrity. These tools can autonomously complete online assignments, from multiple-choice quizzes to essays, leaving educators and learning platforms struggling to respond as tech companies market their products directly to students.

The conflict highlights a growing divide between technology developers who promote AI as a learning aid and educators who see it as a powerful enabler of cheating. While companies deflect responsibility, teachers are left on the front lines as the technology rapidly evolves.

Key Takeaways

- New AI agents from companies like Perplexity and OpenAI can log into learning platforms and complete homework, quizzes, and essays on behalf of students.

- Tech companies are actively marketing these tools to students, with some advertisements appearing to promote their use for completing assignments.

- Educators and learning management systems like Canvas are finding it nearly impossible to detect or block these sophisticated AI tools.

- A debate over responsibility has emerged, with tech firms suggesting academic integrity is an ethical issue for students, not a design problem for their products.

The New Frontier of Automated Cheating

The latest evolution in artificial intelligence is no longer just about generating text; it's about taking action. AI agents are tools designed to perform multi-step tasks online without step-by-step human direction. For students, this means an AI can log into their school portal, navigate to an assignment, complete it, and submit it for a grade.

Educators began raising alarms this fall after videos surfaced showing these agents in action. In one demonstration, an OpenAI agent accessed Canvas, a popular learning management system, to generate and submit an essay. In another, a Perplexity AI assistant successfully completed a quiz and a short writing assignment.

What Are AI Agents?

Unlike chatbots that respond to prompts, AI agents are designed to be autonomous. They can understand a goal, like "complete my biology homework," and then execute the necessary steps, such as logging in, finding the assignment, answering questions, and clicking the submit button. This automation makes their use for cheating much harder to trace than simply copying and pasting text from a chatbot.

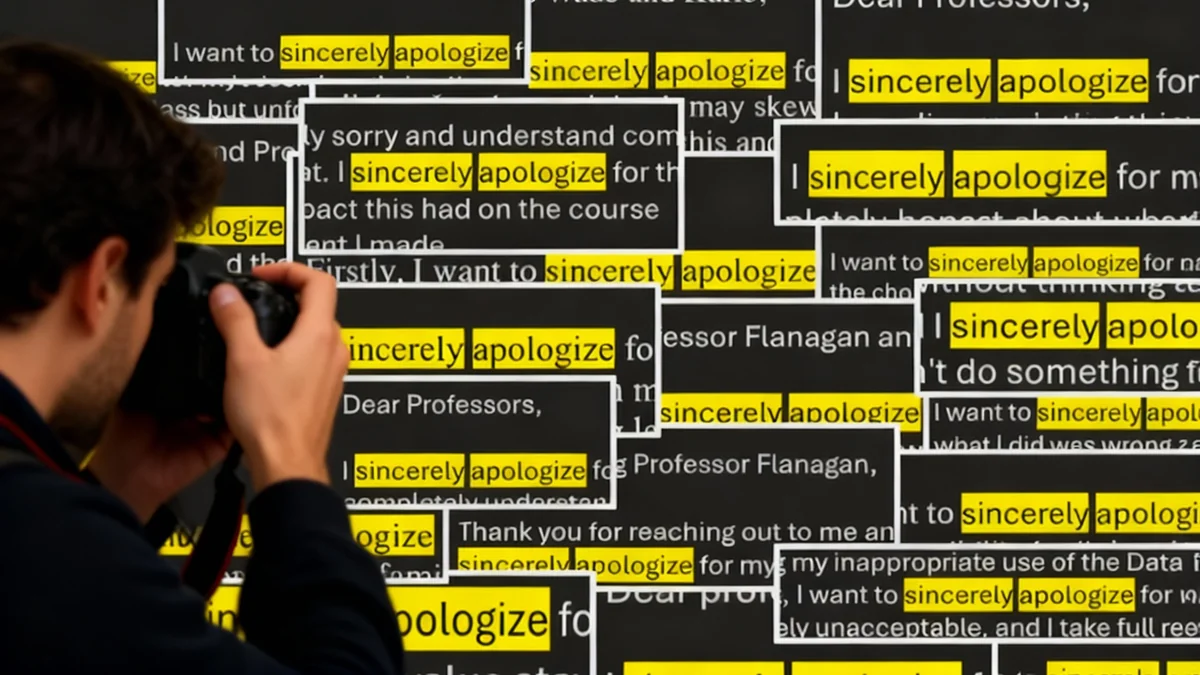

The sophistication of these tools has caught many by surprise. Yun Moh, a college instructional designer, demonstrated how an agent could even participate in a 'get to know you' discussion forum, introducing itself as him. "It actually introduced itself as me … so that kind of blew my mind," Moh stated, highlighting the agent's ability to convincingly mimic a student's online presence.

Tech Companies Target the Student Market

AI companies are not passively waiting for students to discover their tools. They are actively courting the education market with promotions, discounts, and referral programs. OpenAI has offered free access to its premium ChatGPT Plus service to college students, while Google and Perplexity provide similar yearlong free access to their advanced AI products.

Perplexity has taken a particularly aggressive approach. The company pays a $20 referral fee for each U.S. student who downloads its AI browser, Comet. Furthermore, some of its advertising campaigns have directly showcased the tool's ability to complete schoolwork.

One Facebook ad depicted a student discussing how peers use Comet's AI agent for multiple-choice homework. Another ad, on Instagram, featured an actor telling students the browser could take quizzes for them. When a video of the agent doing exactly that went viral on X, Perplexity CEO Aravind Srinivas reposted it with the comment, "Absolutely don’t do this."

"Every learning tool since the abacus has been used for cheating. What generations of wise people have known since then is cheaters in school ultimately only cheat themselves."

This response reflects a common stance among tech companies: the ethical use of a tool is the user's responsibility, not the creator's. This position leaves educators feeling that companies are handing students unstoppable cheating machines while blaming those same students for using them.

Educators and Platforms Caught in the Middle

The burden of policing this new technology is falling squarely on educators and the platforms they use. Instructure, the parent company of Canvas, serves a vast user base, making it a key battleground.

Canvas by the Numbers

Instructure's Canvas platform is used by tens of millions of students and educators, including at every Ivy League school and in an estimated 40% of U.S. K–12 districts, according to the company.

When instructional designer Yun Moh raised concerns with Instructure about AI agents impersonating students, the company's response suggested the problem was philosophical rather than technical. In a statement to Moh, the executive team indicated a reluctance to block AI, instead wanting to "create new pedagogically-sound ways to use the technology."

Brian Watkins, a spokesperson for Instructure, was more direct, stating the company "will never be able to completely disallow AI agents" and cannot control "tools running locally on a student’s device." This admission underscores the technical challenge: detecting an AI agent is extremely difficult because it can be programmed to mimic human behavior, avoiding patterns like submitting assignments too quickly.

Anna Mills, a college English instructor and member of the Modern Language Association’s AI task force, described the situation as "the wild west." She and other educators argue that guardrails put in place by AI companies are often inconsistent and easily bypassed. The task force has called on companies to give educators more control over how AI is used in their classrooms.

A Search for Accountability

As the debate intensifies, a clear solution remains elusive. Tech companies, educational platforms, and institutions seem to be engaged in a circular conversation about responsibility. OpenAI's vice president of education, Leah Belsky, has stated that AI should not be used as an "answer machine" and that the company is focused on helping students use AI to "enhance learning, not subvert it."

Instructure, for its part, advocates for a "collaborative effort" between tech companies, institutions, teachers, and students to define responsible AI use. However, with powerful tools already in the hands of millions of students, educators argue that these conversations are happening too late.

While companies and platforms discuss long-term strategies and ethical frameworks, teachers are facing the immediate reality in their classrooms. The deals have been signed and the products have been released, leaving educators to manage the consequences with little support and few effective tools to ensure academic integrity.